UX Research Case Study

Metaquest VR usability benchmark.

UX research for a B2B wearable technology product at a Fortune 500 tech company — improving task success through behavioral insights.

The Problem

Users were hitting walls during core interactions. Hand controls felt unintuitive, gesture-based inputs weren't landing, and navigating within an immersive environment tripped people up in ways that weren't obvious from the outside.

The Fortune 500 client needed to understand exactly where and why users were struggling — so they could make confident product decisions backed by real behavioral evidence rather than assumptions.

That was the tension at the center of the project: hardware that could be impressive and still feel impossible to use.

The Approach

My job on this study was to translate a vague "usability problem" into something the product and design teams could act on directly. That meant designing a repeatable benchmarking system, not a one-off report.

Ran 120+ moderated sessions to capture real-time behavioral data — task attempts, failure points, recovery strategies — rather than relying on self-reported experience alone.

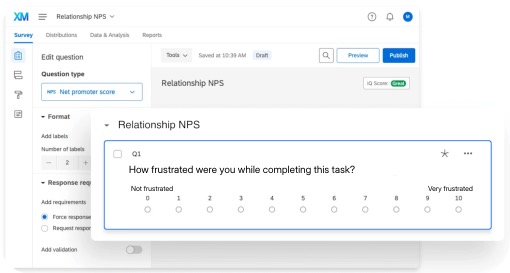

Paired each task with a post-task frustration rating and a Relationship NPS score, turning qualitative moments into something comparable across cohorts and releases.

Segmented results by age, familiarity with immersive tech, and physical dexterity — surfacing patterns that aggregate averages were hiding from stakeholders.

Built the rubric so every recommendation the team made afterward could be tied back to a specific, observed behavior — no more "gut call" product decisions in roadmap reviews.
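
To make the segmentation step concrete, here is a minimal Python sketch of how per-segment task success can be compared with the aggregate figure. It is an illustration under stated assumptions, not the tooling used on the study: the `TaskAttempt` schema, the age-band labels, the 1-to-5 frustration scale, the task name, and every record in `sample` are hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class TaskAttempt:
    """One participant's attempt at one scripted task (hypothetical schema)."""
    task: str
    age_band: str      # illustrative labels such as "18-34" or "55+"
    succeeded: bool
    frustration: int   # post-task rating, assumed 1 (none) to 5 (severe)


def success_rate(attempts):
    """Return the share of attempts that succeeded, as a float in [0, 1]."""
    attempts = list(attempts)
    if not attempts:
        raise ValueError("no attempts to summarize")
    return sum(a.succeeded for a in attempts) / len(attempts)


def success_by_segment(attempts, key):
    """Group attempts by key(attempt) and return {segment: success rate}."""
    groups = defaultdict(list)
    for attempt in attempts:
        groups[key(attempt)].append(attempt)
    return {segment: success_rate(group) for segment, group in groups.items()}


# Made-up records for illustration only; not data from the study.
sample = [
    TaskAttempt("pinch_to_select", "18-34", True, 1),
    TaskAttempt("pinch_to_select", "18-34", True, 2),
    TaskAttempt("pinch_to_select", "18-34", True, 1),
    TaskAttempt("pinch_to_select", "55+", False, 5),
    TaskAttempt("pinch_to_select", "55+", True, 3),
    TaskAttempt("pinch_to_select", "55+", False, 4),
]

overall = success_rate(sample)
by_age = success_by_segment(sample, key=lambda a: a.age_band)

print(f"overall: {overall:.0%}")
for band, rate in sorted(by_age.items()):
    print(f"{band}: {rate:.0%}")
```

In this toy sample the overall success rate looks moderate, while the split by age band shows one group succeeding every time and the other failing most attempts. That is the kind of gap a single average hides. The same `success_by_segment` helper could be keyed on familiarity with immersive tech or dexterity instead of age, assuming those fields were added to the record.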

In the Field

Sessions took place across a range of user profiles — first-timers, power users, accessibility-sensitive participants. Every session ran the same task script so behavioral data stayed comparable, while moderator notes captured the ad-hoc moments where friction actually lived.

The Pattern

A few patterns kept showing up across sessions, pointing to the same underlying issue.

Users failed tasks most often because of unintuitive hand-control interactions — not because they didn't understand the concept, but because they couldn't tell whether what they were doing was working.

Adults struggled significantly more than younger users to adapt to gesture-based controls. The learning curve wasn't just steep — it was steeper for certain groups in ways the product hadn't accounted for.

Small changes to control mapping and visual cues produced noticeable improvements in task success — a hopeful signal that the fixes didn't have to be massive to matter.

Physical interaction design in immersive products is load-bearing. Get it wrong and nothing else about the experience can compensate for it.

Outcome & Impact

The benchmarking study gave the product team a shared language for what "hard to use" actually meant — and a baseline they could measure future releases against.

What It Taught Me

This was my first time working at this scale — 120+ sessions, enterprise stakeholders, a product category most users had never touched before. It stretched my research skills in ways a smaller study wouldn't have.

I came out of it with a much sharper instinct for how to observe without interfering, how to spot a pattern early enough to make it useful, and how to translate messy behavioral data into something a product team can actually build from.

Behavioral research turns vague friction into a roadmap.